-

Small and portable Integron

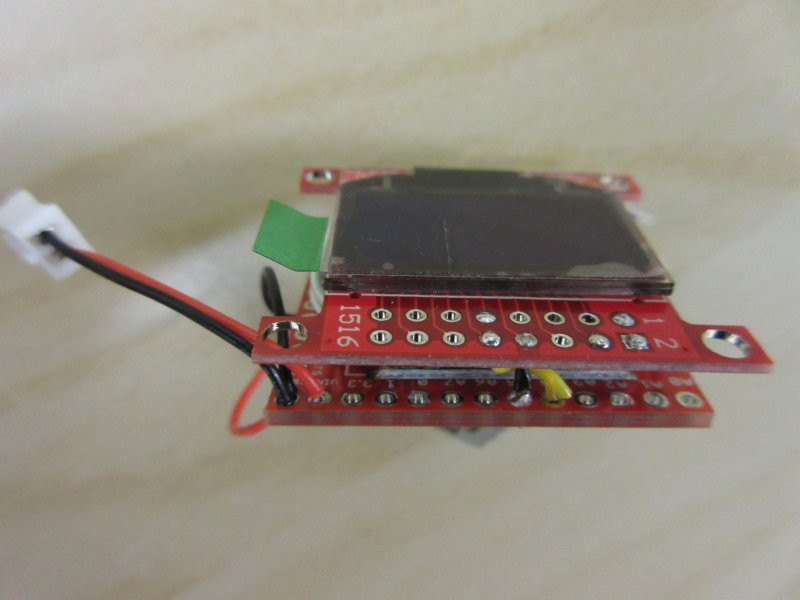

06/16/2014 at 17:38 • 0 comments

Sometimes you need a little screen to tell you about something of interest that is happening on your network.

With a tiny Arduino clone, a HopeRF transceiver, a small OLED screen, and a small LiPo battery, you can have an inexpensive, position-aware output device that you can put anywhere you need it. It is totally standalone, and it is "dumb" in that it only displays what it is told to display. The Reactron network can address it wirelessly and push data to it. Because there are many other RF transceivers in the room, it can estimate where it is by statistically weighted triangulation of their RSSI (received signal strength). This has been good enough for my purposes.
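The post does not spell out the weighting scheme, so the following is only a minimal sketch of one common approach, a weighted centroid. It assumes each fixed transceiver's position is known and that signal strength follows a log-distance path-loss model. The `Anchor` class, the default path-loss exponent of 2.0, and the down-weighting of noisy anchors by their RSSI spread are all assumptions for illustration.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Anchor:
    """A fixed transceiver at a known position (hypothetical structure)."""
    x: float
    y: float
    rssi_dbm: list  # recent RSSI samples heard from this anchor


def estimate_position(anchors, path_loss_exponent=2.0):
    """Weighted-centroid estimate of (x, y) from per-anchor RSSI samples.

    Under a log-distance path-loss model, RSSI = P0 - 10*n*log10(d), so
    10 ** (RSSI / (10*n)) is proportional to 1/d: nearer anchors weigh more.
    Anchors whose samples are noisy (large spread) are discounted.
    """
    sum_w = sum_x = sum_y = 0.0
    for a in anchors:
        if not a.rssi_dbm:
            continue  # nothing heard from this anchor yet
        mean_rssi = statistics.fmean(a.rssi_dbm)
        spread = statistics.pstdev(a.rssi_dbm)
        w = 10 ** (mean_rssi / (10 * path_loss_exponent)) / (1.0 + spread)
        sum_w += w
        sum_x += w * a.x
        sum_y += w * a.y
    if sum_w == 0.0:
        return None  # no usable anchors: position unknown
    return sum_x / sum_w, sum_y / sum_w
```

Averaging several samples per anchor before weighting is what makes the estimate "statistical": a single RSSI reading is noisy, but the mean over a short window is much steadier.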

OLED screen side. The JST connector is for recharging the LiPo.

Reactron microcontroller side, HopeRF radio sandwiched in between.

I had taken to wearing one on my wrist to find out what was happening at places not near me, the idea being that I could already perceive the things I was near, so I just needed information about the things I was not near. The Processor behind this was one of the first implementations of a simple Recognizer, where the RSSI was effectively a Collector input. Later I wanted to leave a few of these lying near a thing I was interested in, so I needed the opposite Processor: one that pushed data to the display about whatever was going on with that nearby thing. Then I needed to tell these two usages apart, and could only do so statistically, so the Recognizer was born.
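How the Recognizer actually separated the two usages is not described here. As a hypothetical illustration only: a display worn on the wrist moves around, so the RSSI it hears from a fixed transceiver should fluctuate more than that of a display left lying in one place. The function name and the 2 dB threshold below are assumptions, not the original rule.

```python
import statistics


def classify_usage(rssi_window, stable_stdev_db=2.0):
    """Hypothetical Recognizer rule based on RSSI variability.

    rssi_window: recent RSSI samples (dBm) from one fixed transceiver.
    Returns "worn" if the signal fluctuates more than the threshold,
    "placed" if it is stable, or "unknown" if there is too little data.
    """
    if len(rssi_window) < 2:
        return "unknown"  # sample stdev needs at least two readings
    spread = statistics.stdev(rssi_window)
    return "worn" if spread > stable_stdev_db else "placed"
```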

After that I realized I needed both things on one display: data concerning the nearby thing, and, as a secondary display, anything anomalous or important happening with remote things. That kept me from being distracted by "checking", and it solidified into the main idea behind the ambient Integron: a passive (but active if you need it) interface device.

One thing on my list that I have not gotten to yet is making a nice case for these, maybe out of NinjaFlex. They are small, and they are portable, but the OLED screens are delicate and they do crack when they fall to the floor.

-

Movers and shakers, Watts and wheels

06/14/2014 at 03:28 • 1 comment

Two auxiliary Reactron projects have been posted. Here and here. Links to them have also been added to the side of this project page.

They describe:

- A generalized material mover (though not much material, and not far... but sometimes that is all you need), and

- An energizer - generalized power control. Not much more than a wirelessly controlled relay of 120VAC power.

I made these separate projects so that their components could be separately listed, rather than have a million components in one list with no organization. They are part of the Reactron network that this main project describes.

More of these auxiliary units will be posted as separate projects as well. The thing of note here is that they are all relatively simple nodes in a larger organization, each devoted to a set of simple tasks, the combinations of which produce a rich complexity of results.

While I stated before that this main project would detail Integrons and Recognizers, there is one type of Integron for which I would like to make a separate project: the automobile Integron-Recognizer system. The reason to make it separate is that while it has all the same stuff as the regular room system, the situation is partly turned inside out.

That is, in a room you may have 4 or more “ambient” units scattered about for easy visual acquisition and good audio collection coverage. All Collectors point inwards, to get data from within the confines of the room. In an automobile, you are more or less captive in your seat, so unit placement matters much less. However, the automobile Integron must look outwards instead of inwards, or at least in addition to inwards. Since the automobile is a moving node, there are other connectivity concerns, and since it can be stolen much more easily than a building, there are different security concerns as well. While the general function of integrating and augmenting human activity is the same, there are sufficient practical and mechanical differences to warrant a separate accounting, in my opinion.

As a quick description now, the automobile Integron adds cameras for face recognition and more complex gesture control. A car may be unlocked from the outside as you approach, if your face is recognized and a certain (settable) gesture is made (OpenCV). I’m too paranoid to use only a single criterion, even though face recognition is a pretty good statistical metric. Additional measures can be added, such as biometric range processing (voice, ECG print, etc.). Once inside the car, the normal voice control exists, and the mini-network in the car is connected to building-based nodes via data transfer over GPRS (or, if parked within range, directly over RF). GPRS is a bit slower, but you then have the ability to ask your car whether you did in fact leave your oven on, or the front door unlocked, and if so, have those things actuated to the state you actually desire - without having to drive back and lose however much time to faulty human memory. I sometimes get distracted thinking about other things, and that is one of the reasons I built this system. I want the system to adapt to my thought flow. It is the computer upgrade to the ancient proverb: “There is no memory so good as faded ink”. I wanted a design that reduced the occurrences of "Ah, if only I had ..." or "If I had thought of that at just that moment, it would have been so much easier".

My car also includes a mini-network of Reactrons besides the Integron-Recognizer unit. There is one for connecting to nearby building-based Reactron networks, and others that handle actuations like door unlocking, manage an AC inverter, and monitor the state of the car itself (e.g. all automated door actuations are prohibited by the Processor when a Collector detects that the car is not in Park).
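The Park interlock is a simple Processor rule gated on a Collector reading. A minimal sketch, assuming a hypothetical gear-position Collector that reports a single-letter gear and hypothetical action names; the rule fails safe, so an unknown gear state also blocks door actuations.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical action names for the car's door actuators.
DOOR_ACTIONS = {"unlock_doors", "lock_doors", "open_trunk"}


@dataclass
class CarState:
    gear: Optional[str] = None  # last gear reading from the Collector, e.g. "P" or "D"


def authorize(action: str, state: CarState) -> bool:
    """Processor rule: door actuations are allowed only when the car is in Park."""
    if action in DOOR_ACTIONS:
        return state.gear == "P"  # an unknown gear (None) also blocks, failing safe
    return True  # other actions (e.g. inverter control) are not gated by this rule
```

Keeping the rule in the Processor, rather than in each actuator, means a new door-related Reactron inherits the interlock as soon as its action is added to the set.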

More to come…

-

Retrospective: Early Integrons

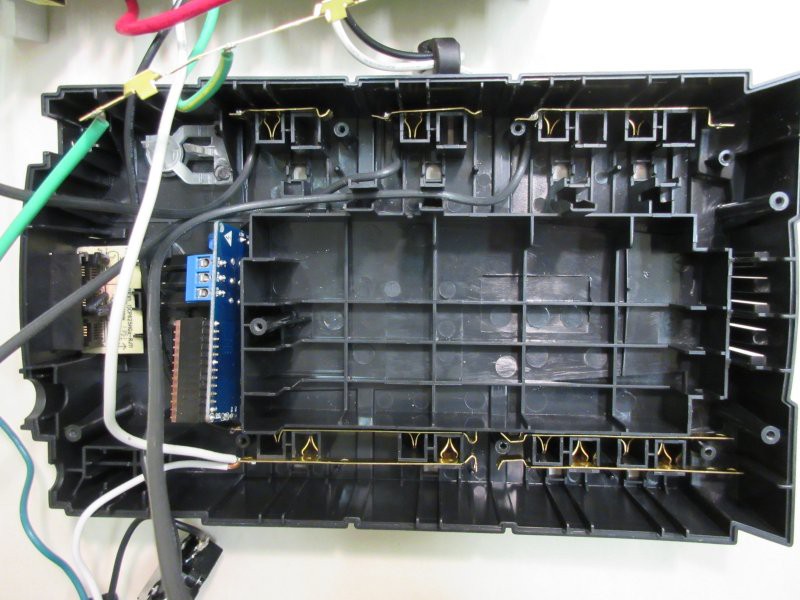

06/11/2014 at 14:16 • 0 comments

Here's a picture of one of the earliest Integrons. A very early Reactron is visible: a small Arduino clone with a HopeRF RFM12B transceiver, fitted to a wireless doorbell. The Arduino clone ran the code to integrate with my network. The doorbell provided a bi-directional human interface - push the button (input), and hear a sound and see a small LED light up (output). Rudimentary, but it was the first step toward a connected, human-integrating system.

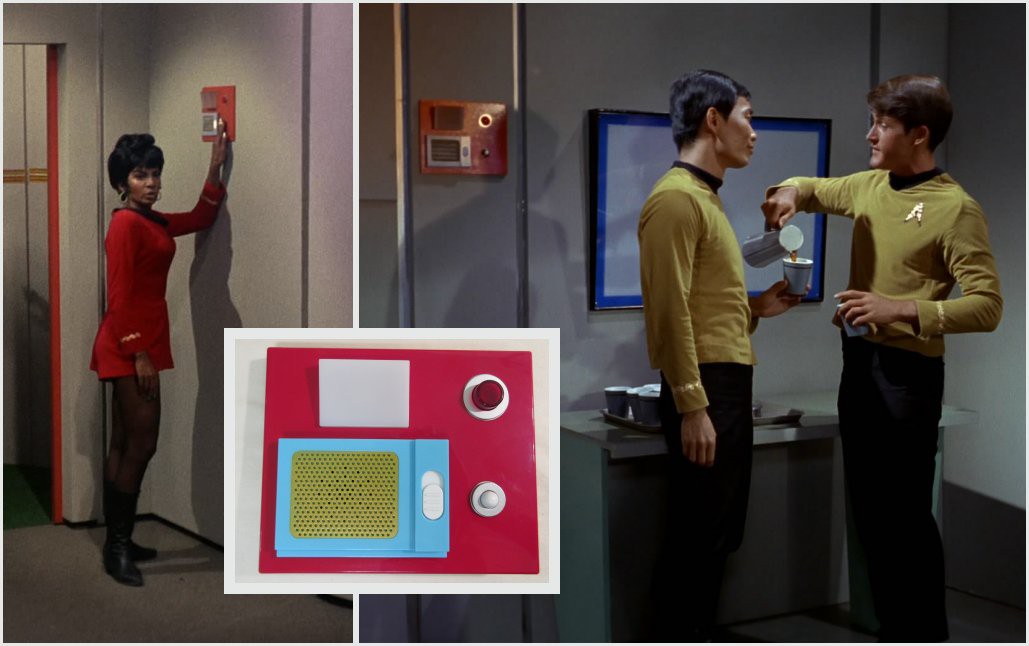

Here is a picture of a later, but still early, Integron. Originally a Star Trek door chime, it came with a control board, two PIR sensors, an LED, a few switches, and a speaker; with my upgrades it acted almost like the ubiquitous piece of hallway equipment on the show. The intent was to have an ever-present, "ambient" interface, even if the level of interface was just a light to be seen. Star Trek had it first! People on the show were always only a few steps away from one of these devices.

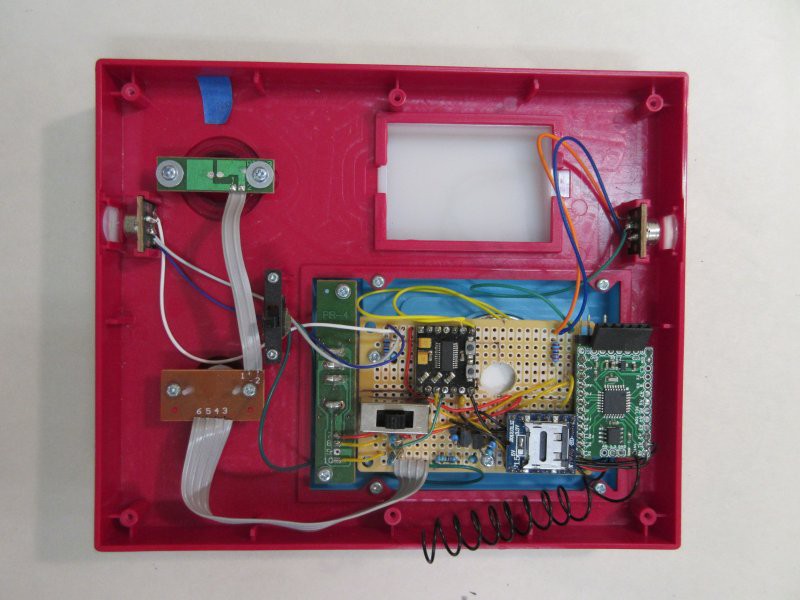

I removed the control board and replaced it with one of my own, with a WTV020-SD-16P module, which can play 512 different sound files, and a PAM8803 amplifier. The Arduino clone in this picture is the green board at the right, and on the reverse is a HopeRF transceiver - the coil is the antenna.

This was a more sophisticated human interface. It could detect movement and have its switches pressed as input, and could light up and/or play sounds as output. It was a nice set of components and form factor for the price. I later added a microphone and did a lot of work on voice control using frequency analysis of voice samples. (I eventually gave up on this: after the frequency analysis had normalized and simplified the data, ambient sound produced too many false positives, initiating actions I did not want. The ATMega328P is just not the right processor for speech analysis. Even when working flawlessly, it could only "recognize" a few token words with known patterns to match against, a small set of static commands.)
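The original code ran on the ATMega328P and is not shown. The sketch below illustrates the general idea in Python, assuming the Goertzel algorithm for measuring energy in a few fixed frequency bands (a swapped-in technique; the post only says "frequency analysis"), normalization to remove loudness, and nearest-template matching with a rejection distance. The band frequencies, templates, and threshold are hypothetical.

```python
import math


def goertzel_power(samples, sample_rate, freq):
    """Power of `samples` in the DFT bin nearest `freq` (Goertzel algorithm)."""
    n = len(samples)
    k = round(n * freq / sample_rate)  # nearest DFT bin index
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev = s_prev2 = 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def band_profile(samples, sample_rate, freqs):
    """Energy in each band, normalized to sum to 1 so loudness drops out."""
    powers = [goertzel_power(samples, sample_rate, f) for f in freqs]
    total = sum(powers)
    if total == 0.0:
        return [0.0] * len(freqs)  # silence: empty profile
    return [p / total for p in powers]


def match_token(profile, templates, max_distance):
    """Name of the nearest template, or None if nothing is close enough."""
    best_name, best_dist = None, math.inf
    for name, template in templates.items():
        dist = math.dist(profile, template)
        if dist < best_dist:
            best_name, best_dist = name, dist
    return best_name if best_dist <= max_distance else None
```

For example, a pure 500 Hz tone sampled at 8 kHz, profiled over bands at 250, 500, 1000, and 2000 Hz, matches a template of `[0, 1, 0, 0]`, while silence matches nothing. The `max_distance` threshold is where the false-positive trade-off shows up: loosen it and ambient sound starts matching commands; tighten it and real commands get rejected.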

The recorded sound files were initially a handful of signal noises, but then I added pre-recorded speech output like individual numbers and common words, so I could piece together meaningful voice responses, even if they were narrowly specific and mostly numerical. I did have 512 slots, after all. This worked well enough, but it required me to maintain the sound file store, and ultimately I wanted a bit more sophistication. I decided to make the jump to an SBC Linux board so I could run speech recognition as well as text-to-speech, for generalized voice input and output. I also wanted something nicer to look at than a bright red box on the wall: something with a more visible light, and at least coarse gesture detection instead of merely motion detection.
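Piecing spoken numbers together from individual clips amounts to mapping a number onto a sequence of sound-file slots. A minimal sketch, assuming a hypothetical slot layout (0-19 for "zero" through "nineteen", 20-27 for "twenty" through "ninety", 28 for "hundred", 29 for "thousand"); actually sending each slot to the WTV020-SD-16P module is left out.

```python
# Hypothetical slot layout on the sound module's SD card.
ONES = list(range(20))                    # slots 0-19: "zero" .. "nineteen"
TENS = {t: 18 + t for t in range(2, 10)}  # slots 20-27: "twenty" .. "ninety"
HUNDRED = 28
THOUSAND = 29


def _below_thousand(n):
    """Slots for 1 <= n <= 999."""
    slots = []
    hundreds, rest = divmod(n, 100)
    if hundreds:
        slots += [ONES[hundreds], HUNDRED]
    if rest >= 20:
        tens, ones = divmod(rest, 10)
        slots.append(TENS[tens])
        if ones:
            slots.append(ONES[ones])
    elif rest:
        slots.append(ONES[rest])
    return slots


def number_to_slots(n):
    """Sequence of sound-file slots that speaks n, for 0 <= n <= 999,999."""
    if not 0 <= n <= 999_999:
        raise ValueError("number out of range for this vocabulary")
    if n == 0:
        return [ONES[0]]
    slots = []
    thousands, rest = divmod(n, 1000)
    if thousands:
        slots += _below_thousand(thousands) + [THOUSAND]
    if rest:
        slots += _below_thousand(rest)
    return slots
```

With this layout, thirty slots cover every number from 0 to 999,999, leaving most of the 512 slots free for signal noises and common words.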

-

Current state of Integrons

06/10/2014 at 00:57 • 0 comments

This system consists of many different components, and it's almost impossible to describe them all succinctly in one project. Therefore, I will concentrate on the Integron and Recognizer components in this project, and establish separate projects to describe the sub-components.

Here are pictures of the current state of the Integron.

A single unit consists of a Raspberry Pi with a microphone and speakers, not too surprising.

I have several of these units, and some use a BeagleBone Black instead of an RPi. I'm leaning toward the BeagleBone Black for standardization.

Some of these units are connected to lights of this type, used as an annunciator:

I chose this light because I wanted something I liked to look at: these are continuously visible, so I thought they should be pleasant to look at. This is a 120VAC light, so it just has a relay and gives a binary on/off signal for "there is something to pay attention to". (It did have a second level available, by blinking, but that was annoying, so I stopped using it.) This worked well in the initial stages of the system. Eventually, though, I wanted a little more granularity from the light, so I cobbled together some units with this light:

This is a $5 LED color orb. What is great about it is that in order to change the battery, you unscrew the bottom, and there is direct access to three LEDs: red, green, and blue. It was easy to remove the battery, bypass the control board, and power the LEDs directly from the RPi. With this, I could add color to the signal. As a test, I had one Processor scrape Yahoo Finance for the state of the Dow Jones and make the orb glow red if it was down, and green if up. It is part of the Integron because it interacts with me by being seen. This was much cheaper than the other light, so I was able to make several. And they are not unpleasant to look at (if they are not blinking).
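The Dow Jones test reduces to a small mapping from a market move to an RGB value for the orb's three LEDs. A minimal sketch, assuming the Processor already has the previous close and the current value (the scraping step is not shown) and that each LED channel is driven with a 0-255 PWM duty cycle. The optional `deadband_pct`, which keeps the orb dark on tiny moves, is an addition for illustration, not part of the original test.

```python
RED = (255, 0, 0)
GREEN = (0, 255, 0)
OFF = (0, 0, 0)


def dow_color(previous_close: float, current: float, deadband_pct: float = 0.0) -> tuple:
    """Return an (r, g, b) duty-cycle tuple: red if the Dow is down, green if up."""
    change_pct = (current - previous_close) / previous_close * 100.0
    if abs(change_pct) <= deadband_pct:
        return OFF  # flat, or within the deadband: leave the orb dark
    return GREEN if change_pct > 0 else RED
```

A small deadband avoids the orb flickering between colors when the index hovers around the previous close, which matters for a light that is meant to be pleasant to glance at.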

I kind of liked the pyramid, though, so I thought about replacing the light source of that light with the LED base from the other.

In some of my units I tied in a few PIR sensors for coarse gesture recognition. Additionally, some units have an Atmel ATMega328P tied to a HopeRF transceiver to integrate with the many small embedded wireless nodes that I have.

I've tried many configurations, and I think I finally have a good complement of parts for a standardized unit. I will make a custom indicator light that has speech recognition and coarse gesture recognition built in. A small screen will add a little more specificity to the unit, so that if I am close to it, I can read in text what the light means. If I am not close, the light acts as an indicator that I take in visually in the general course of my life. There is no need to constantly check the smartphone; this is meant to present to me, in an ambient way, what I deem important.

This post was just about the Integron unit. Currently, there are many Reactrons on the executing-an-action side of things. I will post about those separately.

-

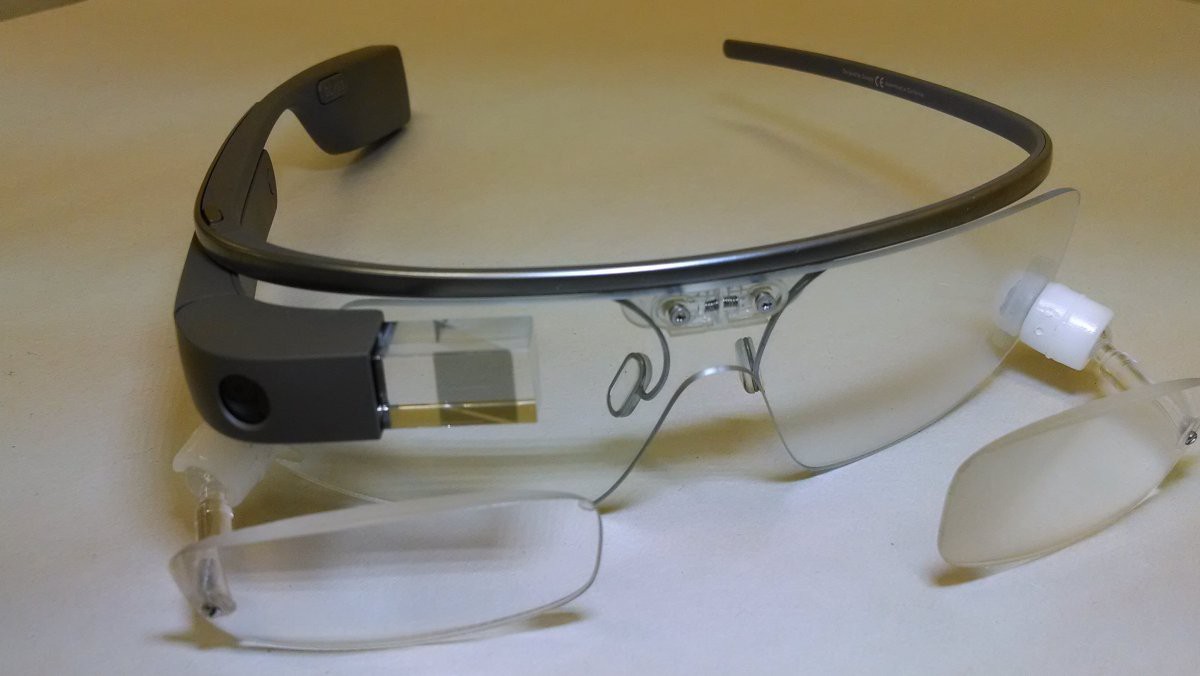

Integrated Google Glass

06/09/2014 at 23:00 • 0 comments

This is my Google Glass.

It is integrated with the system as explained in the project details.

I did a mechanical hack to add swing-out and -away +4.00 diopter magnifiers for working on circuits and other small things.

One lens can swing over the camera in case I want to take a magnified photo.

I use this to augment my view digitally while working. I mostly use it as a display device - it has the benefit of being tied to my head so I do not have to avert my gaze from whatever I am concentrating on physically, in order to digest some additional digital information, like circuit diagrams or datasheets.

It seems to me that Raspberry Eye could also work here.

-

Project description clarified and updated

06/09/2014 at 21:03 • 0 comments

I have updated the project description.

The original description, preserved below, described what the system is, and the paradigms behind its structure. But it seemed maybe too high-level and perhaps did not convey everything I wanted, so I decided to write a new description focused instead on what the system does. And a bit on why I built the system.

I was concerned that the original description could have conveyed the sense that the system was some sort of parallel-processing scheme, basically a complex computer built from many simple ones. While that is in some ways true, it is really neither the thrust nor the purpose of the system. Additionally, the system is not trying to outperform any benchmarks - it will work with slow hardware or fast hardware. The main standard is human response time, and even old machines are very fast by comparison. I have ten-year-old machines running on the system that work quite well. They may not be able to load a bloated modern web app, but I don’t use them for that. It will be 2038 before they are obsolete - they will probably cease functioning for other reasons before then (I can’t tell you how many CPU cooling fans and electrolytic capacitors I have replaced...)

Original description:

DESCRIPTION

We view our tools as extensions of ourselves. This paradigm results in very complex machines as technology progresses. Complex things tend to be expensive, hard to maintain, and prone to failure or instability. The result has been less-than-optimal machine interfaces that waste our time and resources, and create unwanted dependencies.

Small computers are now sufficiently inexpensive to be ubiquitous, and sufficiently powerful to provide the minimum complexity to build a system using a different paradigm: very simple machines can be building blocks that form extremely complex and capable systems.

This project seeks to demonstrate a small system of machine units, that can produce a few concrete results in the physical human world. This requires a new machine dedicated to the communication with the user. All other system capabilities are provided by other individual units. Natural integration means minimal training, less frustration, and more time for humans to do human stuff.

DETAILS:

Human culture has produced a lot of amazingly complex things, from literature and art to science and technology. Humans are built of complex chemical structures with specialized behaviors. Enzymes and proteins flex and twist, react and interact to create each individual human, each a part of human culture. These complex chemicals are in turn made of atoms, relatively simple units with relatively few and simple rules of engagement and interaction.

The highest achievements of human culture may then be considered a consequence of the minimal essential complexity of atoms - things that obey a few rules, with a relatively simple and standardized interface. It is of note that the largest atoms, with the most electron shells, the largest number of possible isotopes, and the largest number of potential states, tend toward instability.

As a builder of machines, I am striving to leverage these natural laws. I have found that it is possible to build a large number of small and simple machines to produce quite complex results. I view the tools I build not as an extension of me, but rather as a “culture” of machine units, where each individual has a certain small set of capabilities. They can communicate very fast by human standards, and can assemble and provide me with a complex result that no single one of them could synthesize on its own. I call my reactive machine units "Reactrons". Overdrive is a condition philosophically related to critical mass, expressed in terms of gear ratio - here, it is meant to evoke the idea that we have enough units to produce wonderful results without pushing any single unit to its limit (and system instability).

People require two things to facilitate communications between two cultures: an ambassador and an interpreter. An ambassador provides an expression of intent and capabilities. An interpreter provides a communications protocol to successfully transmit these expressions. Efficient interaction and understanding between cultures can result. I will build a simple version of a machine ambassador/interpreter and a small culture of machines to support that interaction.

As new units are added, the entire system’s capabilities increase, and so does its utility, without having to reinvent the whole system at each step. Old machines are not discarded - as long as they can still do their small set of tasks, they are not rendered obsolete by the newest do-everything machine. The system as a whole is fault tolerant: it maintains continuity of data despite the failure of individual units. But most importantly, the machines are built to understand people, and allow people to be themselves.

This is not simply a voice-controlled computer with speech synthesis. Many others have done this, and better than I can. Indeed, while those are components of the system, they are not required, and they are both replaceable and augmentable. This system uses inputs including voice and gesture, as well as passive data acquisition like collection of vital statistics, in order to promote a machine understanding of the humans involved with the system. There are other aspects as well, such as the persistence and ownership of data. I hope others improve on it and expand its capabilities - and for that, yes, it will all be open source.

There is a lot more detail behind this, including a mathematical system I refer to as interface wave function harmonics, and I will document those details for those that want to dig deeper into the founding principles of the design.

What is being built:

1) Multiple ATMega328P-based controllers that comprise the bulk of the Reactron system. These controllers will control things in the physical world, such as control points and power for a variety of existing appliances. Most of these controllers will have the ability to communicate via RF transceiver (currently a HopeRF RFM69W). I have used the Roving Networks RN-171 in the past for direct TCP/IP connection; however, I now find it more efficient to transmit via RF to other controllers that are bridged to an SBC Linux board that manages TCP/IP traffic. The two approaches are not mutually exclusive, though, and the RN-171 may be included eventually.

2) Multiple human-integration points (Integrons) that will support speech recognition and speech synthesis. These points will be SBC Linux boards such as BBB and RPi. They will each have a connected ATMega328P with an RF transceiver to connect and coordinate with the "physical world nodes" mentioned above. Integrons are designed to integrate and interface with humans, and humans have senses other than hearing and capabilities other than speech. For this project, Integrons will take the form of annunciator lights to give humans a quick visual status; speech recognition, speech synthesis, and limited gesture recognition for medium-complexity interaction; and a small screen for (relatively) high-complexity one-way interaction. A keyboard would give high-complexity two-way interaction; however, we already have other machines for this, and the Integron is not designed to do everything, just a few things. (There will be a separate biometric input device to replace a keyboard, anyway.) The intent is to provide an unobtrusive and ubiquitous device that delivers 80% machine integration utility for 80% of the things one needs. If, however, you want to consume complex content, you can stare at a screen. If you want to produce content, you can work at a keyboard. Those things, we already have. The problem is, we use them for everything, leading to people swiping and staring into their smartphones for long periods of time while all the potential for beautiful human activity passes by.

The items in the first category can do things that are important to us in the physical world, without our interference, or in response to our commands. The items in the second category can render to us important information about the things that are occurring in the machine world, as well as deliver commands to that world. The two together can connect our electronic lives with our physical lives, and let our philosophical lives be free to be concerned with self-realization instead of machine maintenance.

Could you use this for home automation? Sure - but it is not a home automation system. Thermostats, lights, and door locks are just simple machines with control points. A sufficiently complex system can optimize simple rules for certain desired outputs like energy savings, or going the other way, maximum illumination to avoid seasonal affective disorder, or any of an infinite number of output variables humans can dream up. The Reactron system is more like "life automation", where home automation has a partial role. Additionally, since no single subsystem is considered permanent, units can come and go, either physically, or in and out of service, dynamically. This means the system follows the human, not the home, which is just another machine, and one that is becoming overly complex (but complex enough now, to be a part of a larger system of the future). This comment is no slight on home automation and its builders - it is a hard enough task, and necessary. I hope those systems ultimately expose small and simple APIs to integrate into the human world, without forcing us to be constantly at the smartphone helm. I hope to help. For now, I am just making a system that is friendly to "whatever you want". I will build some stuff I want, to demonstrate.

-

Blog established, a future vision posted

06/04/2014 at 21:50 • 0 comments

This project is not so easy to describe, because it is really attempting to build the future, but we describe things using paradigms from the present, and hardware from the past (even if recent).

There's philosophy and math behind this, and the images and video will flow a bit later on. But in the meantime, I've established a separate place to discuss the philosophy and math, and I will re-write the description here to focus on the mechanics and applications. I think that makes the most sense. Cool stuff here, fine print there.

The blog at reactronoverdrive.com is sparse right now, but will get fleshed out soon.

In the meantime, I've posted a vision of a possible future, one I am trying to help build. I'm sure the real future will simply be unimaginable. But this post references the technological road from here to there. Some of the technologies it discusses already exist but need refinement, optimization, and cost reduction; mostly, though, they need a system of synergistic integration, instead of the taxing kind of integration we practice today.

Reactron Overdrive

A small but critical number of minimally complex machines interact with each other, providing machine augmentation of human activity.

Kenji Larsen

Kenji Larsen