-

Front page news

6 days ago

This project recently hit the Hackaday front page. So this seems like a good time for a quick update, and maybe some clarifications.

What this project is

1. Research into ultra-low cost hardware for TinyML systems.

The motivation is to explore what is possible within an artificially constrained budget, and what the computational constraints of such an environment imply for the software and ML side.

2. Testing grounds for the emlearn open-source software package. The software is mostly used on slightly more powerful microcontrollers, typically 0.5-5 USD for just the microcontroller, and similar amounts in sensors. But trying to scale down is a good torture test.

What this project is not

1. NOT a good starting point for getting into ML on microcontrollers and sensors (TinyML).

For that, I recommend getting much beefier hardware, like an ESP32 with several megabytes of RAM and FLASH. That will be a lot more practical and fun. Adafruit, Seeed Studio, SparkFun, Olimex etc. all have good options. Arduino with TensorFlow Lite for Microcontrollers is probably still the most practical software starting point. I am working on MicroPython bindings for emlearn, which aim to be super accessible. But that project is still in very early days.

2. NOT a ready-to-run board

Current rev0 boards have just been through basic HW bringup - with several critical problems for actual usage. But they look to be good enough to continue testing on - which is all that matters for a rev0 board. A new board revision will come some time in the summer, after I have had time to test and develop some more. That might actually be usable, if we are lucky.

The BLE driver and firmware are also just skeletons at this point in time.

News

CNN running on PY32. I have been testing running some Convolutional Neural Networks on Puya PY32. I was able to port TinyMaix successfully, and run a 3 layer CNN that takes 28x28 dimensional input. This complexity would be suitable for doing simple audio recognition - which is of interest in this project. However, it used 2 kB RAM and 25 kB of FLASH - leaving only 2 kB RAM and 7 kB FLASH for the rest of the system. That would be a tight squeeze... But they claim the AVR8 port used only 12 kB FLASH - so maybe it can be optimized down. To be investigated....

emlearn + MicroPython presentation at PyData Berlin. The slides are available. Video is to be published in the coming weeks, I believe.

Going to TinyML EMEA 2024 in Milano, Italy in June. I will be presenting about the emlearn TinyML software project. And maybe also a little bit about this hardware project :)

-

Audio input for 20 cents USD

02/25/2024 at 15:40

TLDR: Using an analog MEMS microphone with an analog opamp amplifier, it is possible to add audio processing to our sensor.

The added BOM cost for audio input is estimated to be 20 cents USD.

A two-stage amplifier with software selectable high/low gain is used to get the most out of the internal microcontroller ADC.

The quality is not expected to be Hi-Fi, but should be enough for many practical Audio Machine Learning tasks.

Ultra low cost microphones

The go-to options for a microphone for a microcontroller based system are digital MEMS (PDM/I2S/TDM protocol), analog MEMS, or analog electret microphone.

The ultra low cost microcontrollers we have found do not have peripherals for decoding I2S or PDM. It is sometimes possible to decode I2S or PDM using fast interrupts/timers or an SPI peripheral, but usually with quite some difficulty and CPU usage. Furthermore, the cheapest digital MEMS microphone we were able to find cost 66 cents. This is too large a part of our 100 cent budget, so a digital MEMS microphone is ruled out.

Below are some examples of analog microphones that could be used. All prices are in quantity 1k, from LCSC.

MEMS analog. SMD mount

- LinkMEMS LMA2718T421-OA5 0.06 USD

- LinkMEMS LMA2718T421-OA1 0.08 USD

- Goertek S15OT421-005 0.09 USD

- CUI CMM-2718AT-42316-TR 0.47 USD

Analog electret. Capsule

- INGHAi GMI6050 0.09 USD

- INGHAi GMI9767 0.09 USD

So there looks to be multiple options within our budget.

![]()

Example of MEMS analog microphones (from CUI)

The sensitivity of the MEMS microphones is typically -38 dBV to -42 dBV, and they have noise floors of around 30-39 dB(A) SPL.

Analog pre-amplifier

Any analog microphone will need an external pre-amplifier to bring the output up to a suitable level for the ADC of the microcontroller. An opamp based pre-amplifier is the go-to solution for this. The requirements for a suitable opamp can be found using the guide in Analog Devices AN-1165, Op Amps for MEMS Microphone Preamp Circuits.

The key criteria, and their implications on opamp specifications, are as follows:

- achieve the necessary gain (Gain Bandwidth Product)

- not introduce noise (Input Noise Density)

- have a flat frequency response (Gain Bandwidth Product)

- not introduce too much distortion (Slew Rate, THD)

Furthermore, it must work at the voltages available in the system, typically 3.3V from a regulator, or 3.0-4.2V from Li-ion battery.

ADC considerations

The standard bit-depth for audio is 16 bit, or 24 bits for high-end audio. To cover the full audible range, the samplerate should be 44.1/48 kHz. However, for many Machine Learning tasks 16 kHz is sufficient. Speech is sometimes processed at just 8 kHz, so this can also be used.

The Puya PY32F003 datasheet specifies power consumption at 750k samples per second. However, an ADC conversion takes 12 cycles, and the ADC clock is only guaranteed to be 1 MHz (typical is 4-8 MHz). That would leave 83k samples per second in the worst case, which is sufficient for audio. In fact, we could use an oversampling ratio of 4x or more - if we have enough CPU capacity.
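The worst-case figure can be checked with a few lines of arithmetic. This is a minimal sketch: the function names are illustrative, and it assumes conversions run back-to-back with no overhead beyond the 12 cycles per conversion.

```python
def max_sample_rate(adc_clock_hz: float, cycles_per_conversion: int = 12) -> float:
    """Upper bound on ADC conversions per second for a given ADC clock."""
    return adc_clock_hz / cycles_per_conversion


def oversampling_ratio(adc_rate_hz: float, audio_rate_hz: float) -> int:
    """Largest whole-number oversampling ratio that fits within the ADC rate."""
    return int(adc_rate_hz // audio_rate_hz)


worst_case = max_sample_rate(1_000_000)        # guaranteed 1 MHz ADC clock
print(round(worst_case))                       # 83333
print(oversampling_ratio(worst_case, 16_000))  # 5
```

At a 16 kHz audio sample rate the worst-case clock leaves room for about 5x oversampling, before considering how much CPU time the extra samples would cost.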

The ADC resolution is specified as 12 bits. This means a theoretical max dynamic range of 72 dB. However, some of the lower bits will be noise, reducing the effective bit-depth. Realistically, we are probably looking at an effective bit-depth between 10 bit (60 dB) and 8 bit (48 dB). Sound levels at a microphone input vary quite a lot in practice. The sound sources of interest may vary a lot in loudness, and the distance from source to sensor also has a large influence. With a low dynamic range, this is a challenge: If the input signal is low, we will have a poor Signal to Noise Ratio, due to quantization and ADC noise. Or, if the input signal is high, we risk clipping due to maxing out the ADC.
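The relation between bit-depth and dynamic range follows the usual rule of thumb of roughly 6 dB per bit. A small sketch of that rule, for an ideal quantizer and ignoring ADC noise (the function name is illustrative):

```python
import math


def dynamic_range_db(bits: float) -> float:
    """Ideal dynamic range of an N-bit quantizer: 20*log10(2^N), about 6.02 dB per bit."""
    return 20.0 * math.log10(2.0 ** bits)


for bits in (12, 10, 8):
    print(bits, round(dynamic_range_db(bits), 1))
# 12 72.2
# 10 60.2
# 8 48.2
```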

Finding the gain

The gain is a critical parameter for amplifier design, as it influences almost all other requirements. Let us use speech as the reference. Normal speech level at 3 meters is approximately 50 dB(A) SPL, and up to 90 dB(A) SPL for shouting up close. These are short-time average levels. And because the sound pressure is not constant, the max level (which the system also needs to represent) is quite a lot higher.

Given a microphone with a sensitivity of -38 dBV, and allowing for 20 dB headroom, the ideal gains would be between 65 dB (1800x) and 25 dB (18x).
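The gain figures can be reproduced with a short calculation. This is a sketch under two assumptions that are not stated explicitly in the post: that the microphone sensitivity is specified at the conventional 94 dB SPL (1 Pa) reference, and that full scale is taken as 1.5 V (half of a 3.0 V peak-to-peak output swing). With those assumptions the numbers come out the same as the 65 dB and 25 dB quoted above.

```python
import math

REF_SPL_DB = 94.0  # assumption: sensitivity is specified at 94 dB SPL (1 Pa)


def preamp_gain_db(level_db_spl, sensitivity_dbv=-38.0,
                   headroom_db=20.0, full_scale_v=1.5):
    """Gain (dB) that places a given sound level headroom_db below full scale."""
    mic_out_dbv = sensitivity_dbv + (level_db_spl - REF_SPL_DB)
    target_dbv = 20.0 * math.log10(full_scale_v) - headroom_db
    return target_dbv - mic_out_dbv


for level in (50, 60, 70, 80, 90):
    print(level, round(preamp_gain_db(level), 2))
# 50 65.52
# 60 55.52
# 70 45.52
# 80 35.52
# 90 25.52
```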

| level (dB SPL) | preamp gain (dB) |
|---|---|
| 50.0 | 65.52 |
| 60.0 | 55.52 |
| 70.0 | 45.52 |
| 80.0 | 35.52 |
| 90.0 | 25.52 |

A two-stage amplifier with selectable gain

Integrated Circuits for operational amplifiers come with either 1, 2, or 4 opamps. It turns out that a chip with 2 opamps can be had for basically the same price as 1. It is generally a good idea to split amplification into multiple stages, as this is less likely to hit the limits of the Gain Bandwidth Product of the opamp. However, in this case we can get another benefit which is more important: the ability to have two different gains. By providing them both to the microcontroller as separate ADC channels, we can switch between them in software. This can either be used statically, in the form of a high/low switch. Or it could be done dynamically by monitoring the inputs, as a very crude form of Automatic Gain Control (AGC).
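The dynamic variant could look something like the following. This is only a sketch of the selection logic, not the project's firmware (which would be written in C). It assumes a 12-bit ADC, an amplifier output biased at mid-scale, and a hypothetical 90% clipping threshold; all names are illustrative.

```python
ADC_MAX = 4095            # 12-bit ADC full scale
MIDPOINT = ADC_MAX // 2   # assumption: amplifier output is biased at mid-scale


def peak_amplitude(samples):
    """Largest deviation from the bias point in a block of raw ADC samples."""
    return max(abs(s - MIDPOINT) for s in samples)


def select_channel(high_gain_block, clip_fraction=0.9):
    """Return 'low' if the high-gain channel is close to clipping, else 'high'."""
    limit = clip_fraction * MIDPOINT
    return "low" if peak_amplitude(high_gain_block) >= limit else "high"


print(select_channel([2047, 2100, 1990]))  # high
print(select_channel([2047, 4095, 0]))     # low
```

Switching per block rather than per sample keeps the decision cheap, at the cost of reacting one block late to sudden loud sounds.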

![]()

Selecting the operational amplifier

Now we know all the parameters to select the opamp.

- Gain needed. Up to 40 dB / 100x (per stage).

- Bandwidth. Audio range, 20 kHz.

- Mic noise floor. -102 dBV

- Output voltage. 3.0V peak to peak

From this we can compute the key opamp specs. The equations are covered in the reference design guide from Analog Devices linked previously. We need something that has:

- Gain Bandwidth Product (GBP). 2 MHz

- Noise density. 20 nV/√Hz

- Slew rate. 0.25 V/us
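Two of these figures can be sanity-checked from the parameters listed earlier. The sketch below uses the common rule that the required GBP is roughly gain times bandwidth, and converts the microphone noise floor into an average noise density, assuming the noise is spread evenly over the 20 kHz bandwidth and ignoring A-weighting. The function names are illustrative, and the exact equations in AN-1165 may differ in detail.

```python
import math


def required_gbp_hz(gain_linear: float, bandwidth_hz: float) -> float:
    """Rule-of-thumb Gain Bandwidth Product needed for one amplifier stage."""
    return gain_linear * bandwidth_hz


def noise_density_nv_rthz(noise_dbv: float, bandwidth_hz: float) -> float:
    """Average noise density (nV/sqrt(Hz)) of a noise floor given in dBV."""
    noise_v_rms = 10 ** (noise_dbv / 20)
    return noise_v_rms / math.sqrt(bandwidth_hz) * 1e9


print(required_gbp_hz(100, 20_000) / 1e6)          # 2.0 (MHz)
print(round(noise_density_nv_rthz(-102, 20_000)))  # 56 (nV/sqrt(Hz))
```

An opamp at around 20 nV/√Hz would then sit well below the microphone's own noise, so the microphone rather than the amplifier should limit the noise performance.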

I reviewed a bunch of cheap opamps at LCSC, that can run on the relevant voltages. Their specifications can be seen in the following table:

| part | manufacturer | cost (USD) | current | noise density (nV/√Hz) | slew rate (V/µs) | GBP (MHz) |
|---|---|---|---|---|---|---|
| LMV321IDBVR | UMW | 0.0290 | 0.06 | 27.0 | 0.52 | 1.00 |
| TLV333 | TI | 0.2085 | 0.06 | 55.0 | 0.16 | 0.35 |
| LM321LVIDBVR | TI | 0.0320 | 0.09 | 40.0 | 1.50 | 1.00 |
| GS8621 | Gainsil | 0.0702 | 0.25 | 18.0 | 1.66 | 3.00 |
| GS8721 | Gainsil | 0.0847 | 1.50 | 12.0 | 9.00 | 11.00 |
| LMV721 | Tokmas | 0.0732 | 1.50 | 11.5 | 9.00 | 11.00 |

We see that the commodity low-cost, low-power LMV321 type chips are slightly out of spec, in both noise density and gain bandwidth product. The LMV721 class of devices has more than good enough performance. The GS8621 is a good alternative that has lower power consumption.
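The selection can also be expressed as a simple filter over the table. The sketch below copies the values from the table above and checks them against the three target specs (20 nV/√Hz, 0.25 V/µs, 2 MHz). The `meets_spec` helper is illustrative, not part of any library.

```python
# (part, manufacturer, cost_usd, current, noise_nv_rthz, slew_v_per_us, gbp_mhz)
OPAMPS = [
    ("LMV321IDBVR", "UMW", 0.0290, 0.06, 27.0, 0.52, 1.00),
    ("TLV333", "TI", 0.2085, 0.06, 55.0, 0.16, 0.35),
    ("LM321LVIDBVR", "TI", 0.0320, 0.09, 40.0, 1.50, 1.00),
    ("GS8621", "Gainsil", 0.0702, 0.25, 18.0, 1.66, 3.00),
    ("GS8721", "Gainsil", 0.0847, 1.50, 12.0, 9.00, 11.00),
    ("LMV721", "Tokmas", 0.0732, 1.50, 11.5, 9.00, 11.00),
]


def meets_spec(noise, slew, gbp, max_noise=20.0, min_slew=0.25, min_gbp=2.0):
    """True if an opamp satisfies the noise, slew rate and GBP targets."""
    return noise <= max_noise and slew >= min_slew and gbp >= min_gbp


passing = [row[0] for row in OPAMPS if meets_spec(*row[4:])]
print(passing)  # ['GS8621', 'GS8721', 'LMV721']
```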

Audio input BOM

- Microphone. Goertek S15OT421-005. 0.0888 USD

- Opamp. Gainsil GS8632. 0.0789 USD

Total of about 17 cents, rounding up to 20 cents with capacitors and resistors.

Next

Now that we have established that the hardware should be able to receive the audio, we need to validate that we are able to process the audio signal with our rather weak microcontroller. That will be the topic of an upcoming post.

-

Board bringup of first prototypes successful

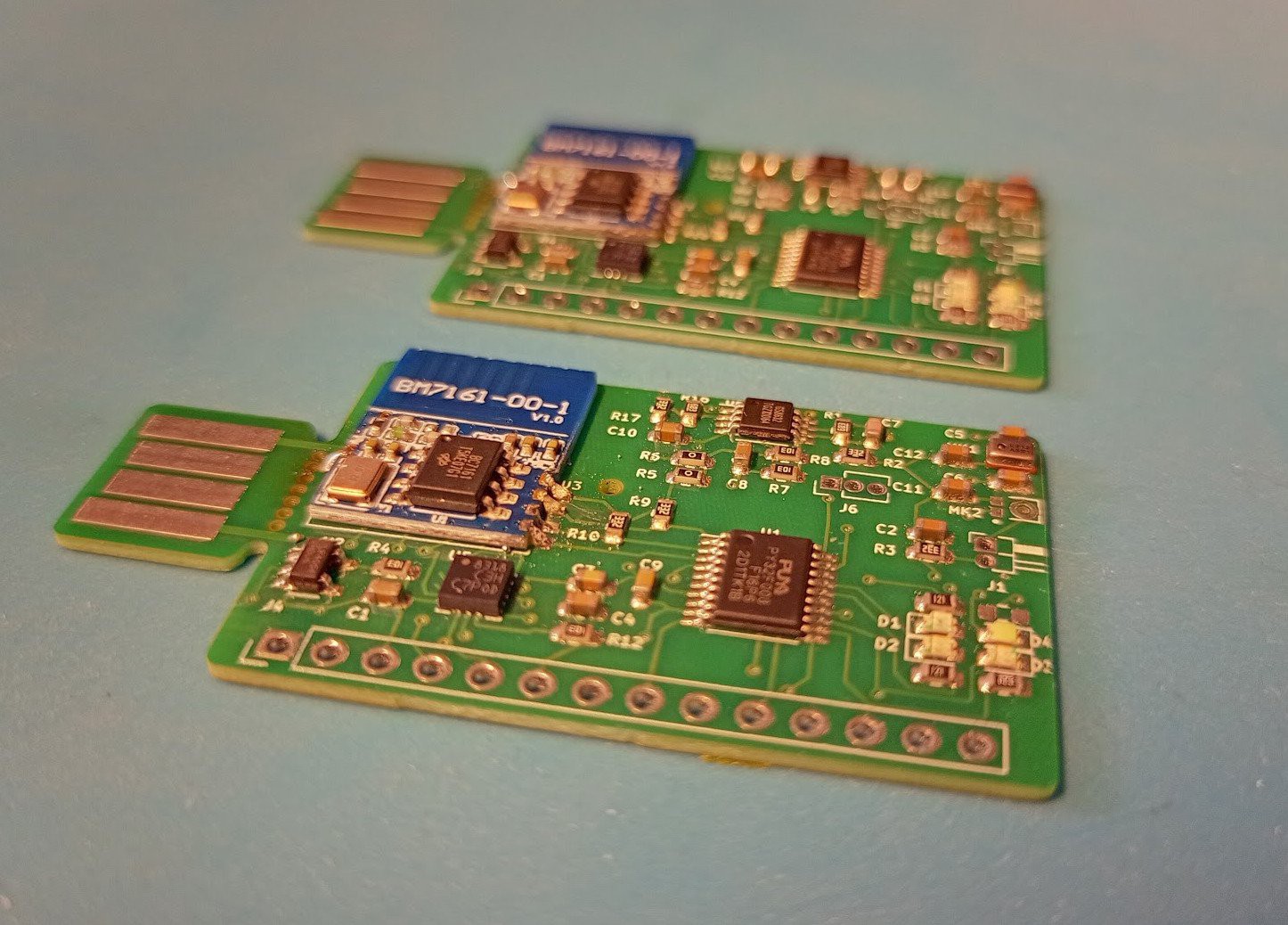

02/25/2024 at 12:25

First prototype boards arrived this week.

![]()

Over the weekend I did basic tests of all the subsystems:

- Charger/regulator. Voltage levels

- Microphone+amp. Gain, noise

- Microcontroller. Flashing, toggle GPIO pin

- BLE module. I2C communication

- Accelerometer. I2C communication

- LEDs. Blink

![Board bringup]()

Board bringup - fun but messy

As always with a first revision, there are some issues here and there. But thankfully all of them have usable workarounds. So we can develop with this board.

Examples of issues identified:

- LEDs are mounted the wrong way. Flip them or use external pins on header

- Battery charger 4.2 V is too high for BLE module and MEMS mic. Use external 3.3 V regulator

- MEMS mic did not work. Use external electret mic

The next step will be to write some more firmware, to validate in more detail that the board is functional. This includes:

- Driver for Holtek BC7161 BLE module (I2C)

- Driver for ST LIS3DH accelerometer (I2C)

- ADC readout for audio input

-

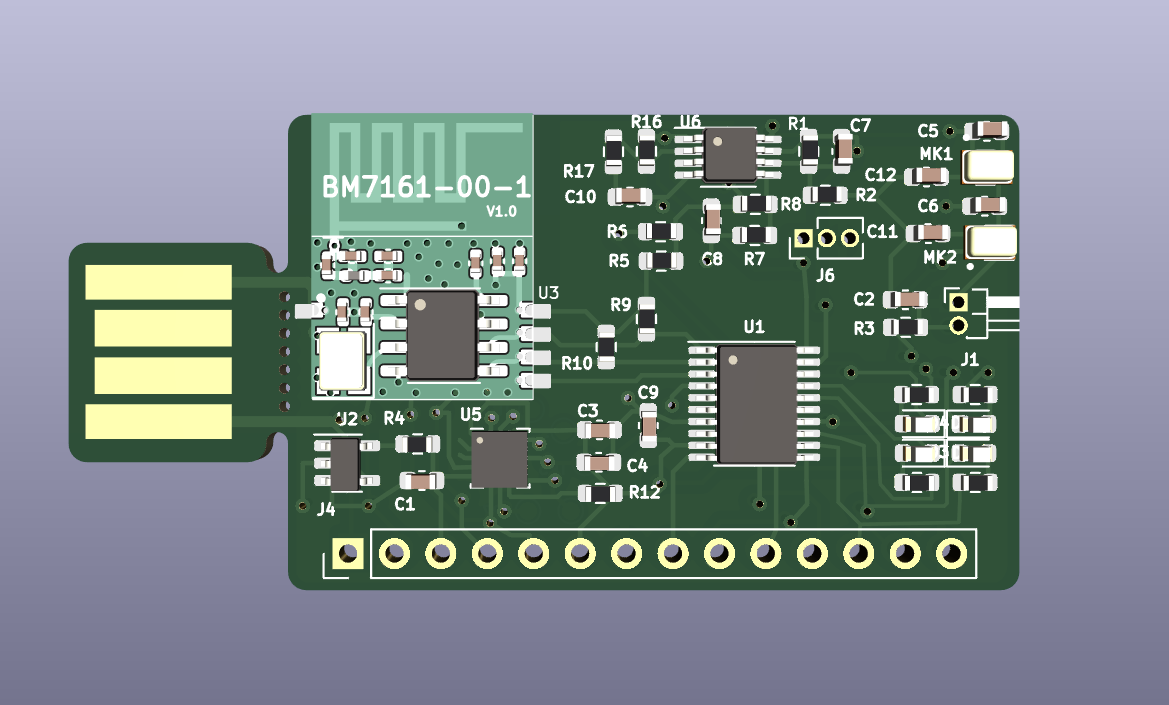

Development board sent to production

02/11/2024 at 11:09

I made an initial development board. This supports both sound-based and accelerometer-based ML tasks, as well as using the LEDs as a color detector. It is intended to be used to develop and validate the tech stack. Further cost-optimization will happen with later revisions.

These are the key components:

- Microcontroller. Puya PY32F003

- BLE beacon transmitter

- Accelerometer. ST LIS3DH

- Microphones. Top-port or bottom-port MEMS, or external electret capsule

- Battery charger for LiPo/Li-Ion cells

- USB Type A connector for power/charge

The board uses a pre-built and FCC certified module for Bluetooth Low Energy, the Holtek BM7161. This is a simple module based around the low cost BC7161 chip.

An initial batch of 10 boards has been ordered from JLCPCB.

![]()

Also did a check of the BOM costs. At 200 boards, the components except for passives cost:

- With microphone: 0.66 USD per board

- With accelerometer: 0.825 USD per board

Additionally, there are around 20 capacitors, 1 small inductor, and 20 resistors needed.

This is estimated to be between 0.15 - 0.20 USD per board.

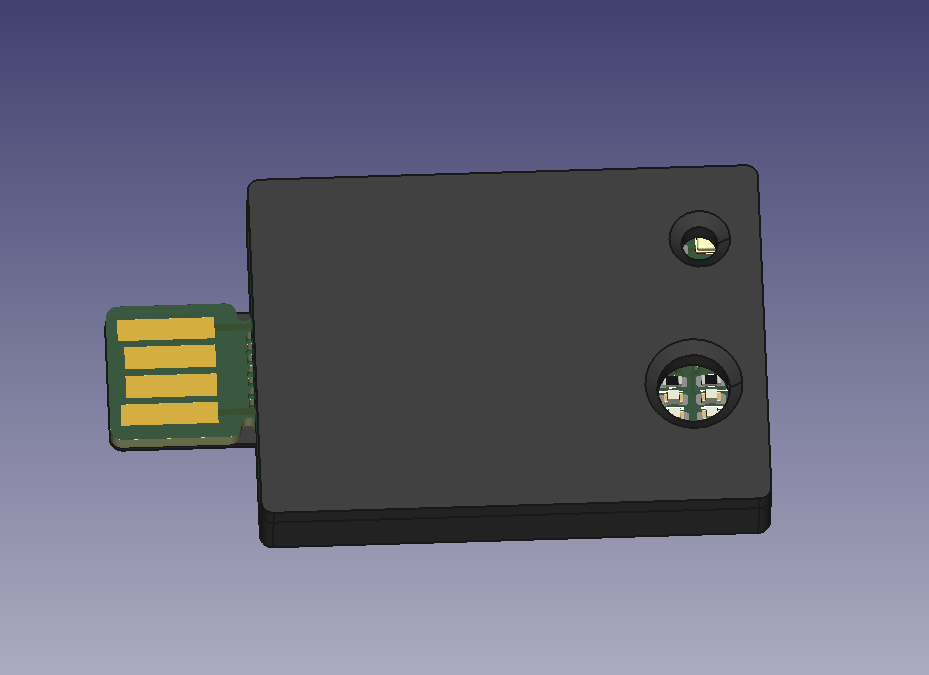

So it looks feasible to get below the 1 USD target BOM, at quantities as low as 200 boards.

Also designed a small 3d-printed case, with holes for the microphone and LEDs / light sensor.

![]()

-

Activity Recognition using accelerometer with tree-based ML models

01/21/2024 at 22:33

Summary/TLDR

This looks to be just barely doable on the chosen microcontroller (4 kB RAM and 32 kB FLASH).

Expected RAM usage is 0.5 kB to 3.0 kB, and FLASH usage between 10 kB and 32 kB.

There are accelerometers available that add 20 to 30 cents USD to the Bill of Materials.

Random Forest on time-domain features can do a good job at Activity Recognition.

The open-source library emlearn has an efficient Random Forest implementation for microcontrollers.

Applications of Activity Recognition

The most common sub-task for Activity Recognition using accelerometers is Human Activity Recognition (HAR). It can be used for Activities of Daily Living (ADL) recognition such as walking, sitting/standing, running, biking etc. This is now a standard feature on fitness watches and smartphones etc.

But there are ranges of other use-cases that are more specialized. For example:

- Tracking sleep quality (calm vs restless motion during sleep)

- Detecting exercise type and counting repetitions

- Tracking activities of free-roaming domestic animals

- Fall detection etc. as an alerting system in elderly care

And many, many more. So this would be a good task to be able to do.

Ultralow cost accelerometers

To have a sub 1 USD sensor that can perform this task, we naturally need a very low cost accelerometer.

Looking at LCSC (in January 2024), we can find:

- Silan SC7A20 0.18 USD @ 1k

- ST LIS2DH12 0.26 USD @ 1k

- ST LIS3DH 0.26 USD @ 1k

- ST LIS2DW12 0.29 USD @ 1k

The Silan SC7A20 chip is said to be a clone of LIS2DH.

So there looks to be several options in the 20-30 cent USD range.

Combined with a 20 cent microcontroller, we are still below 50% of our 1 dollar budget.

Resource constraints

It seems that our project will have a 32-bit microcontroller with around 4 kB RAM and 32 kB FLASH (such as the Puya PY32F003x6). This sets the constraints that our entire firmware needs to fit inside. The firmware needs to collect data from the sensors, process the sensor data, run the Machine Learning model, and then transmit (or store) the output data. We would like to use under 50% of RAM and FLASH for buffers and model combined, so under 2 kB RAM and under 16 kB FLASH.

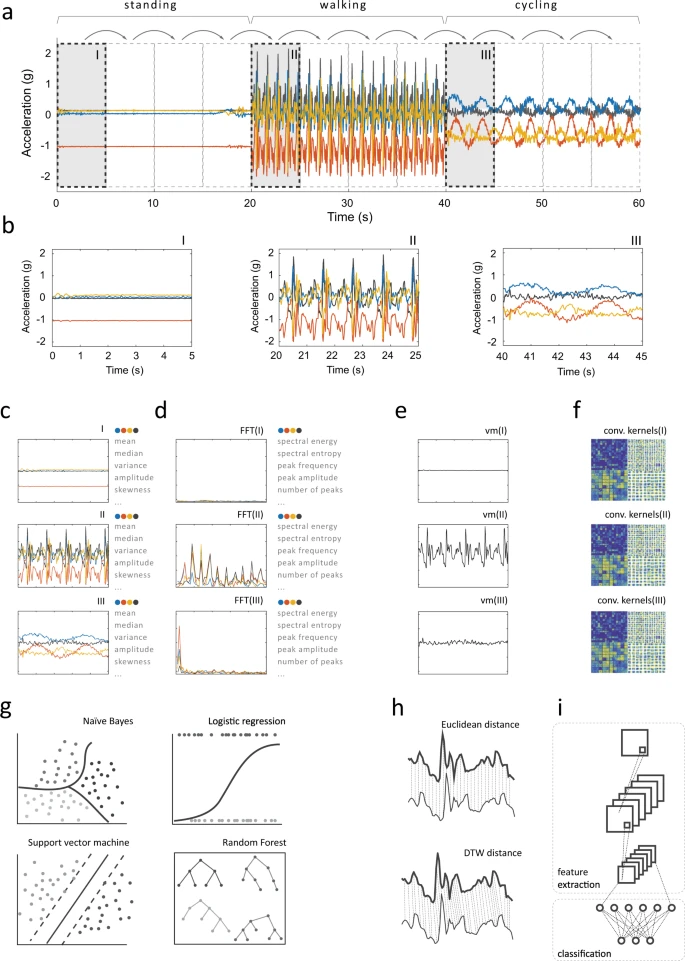

Overall system architecture

We are considering an ML architecture where accelerometer samples are collected into fixed-length windows (typically a few seconds long) that are classified independently. Simple features are extracted from each of the windows, and a Random Forest is used for classification. The entire flow is illustrated in the following image, which is from A systematic review of smartphone-based human activity recognition methods for health research.

![]()
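The windowing step at the start of this flow can be sketched as follows. This is an illustrative helper, not code from the project or the cited papers; the optional `hop_length` parameter (for overlapping windows) is an assumption added for generality.

```python
def split_windows(samples, window_length, hop_length=None):
    """Split a sequence of samples into fixed-length windows.

    The incomplete tail is dropped. hop_length defaults to window_length,
    which gives non-overlapping windows.
    """
    hop = hop_length or window_length
    last_start = len(samples) - window_length
    return [samples[start:start + window_length]
            for start in range(0, last_start + 1, hop)]


stream = list(range(10))  # stand-in for accelerometer samples
print(split_windows(stream, 4))  # [[0, 1, 2, 3], [4, 5, 6, 7]]
```

Each window would then be passed to feature extraction and classified on its own.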

This kind of architecture was used in the paper Are Microcontrollers Ready for Deep Learning-Based Human Activity Recognition? The paper shows that it is possible to perform similarly to a deep-learning approach, but with resource usage that is 10x to 100x better. They were able to run on Cortex-M3, Cortex-M4F and Cortex-M7 microcontrollers with at least 96 kB RAM and 512 kB FLASH. But we need to fit into about 5% of that resource budget...

RAM needs for data buffers

The input buffers and intermediate buffers tend to take up a considerable amount of RAM. So an appropriate tradeoff between sampling rate, precision (bit width) and length (in time) needs to be found. Because we are continuously sampling and also processing the data on the fly, double-buffering may be needed. In the following table, we can see the RAM usage for input buffers to hold the sensor data from an accelerometer. The first two configurations were used in the previously mentioned paper:

| buffers | channels | bits | samplerate (Hz) | duration (s) | samples | size (bytes) | percent of 4 kB RAM |
|---|---|---|---|---|---|---|---|
| 2.00 | 3 | 16 | 100 | 1.28 | 128 | 1536 | 37.5% |
| 2.00 | 3 | 16 | 100 | 2.56 | 256 | 3072 | 75.0% |
| 2.00 | 3 | 8 | 50 | 1.28 | 64 | 384 | 9.4% |
| 2.00 | 3 | 8 | 50 | 2.56 | 128 | 768 | 18.8% |
| 1.25 | 3 | 8 | 50 | 2.56 | 128 | 480 | 11.7% |

16 bit is the typical full range of accelerometers, so it preserves all the data. It may be possible to reduce this down to 8 bit without sacrificing much performance. This can be done by scaling the data linearly, or implementing a non-linear transform such as square-root or logarithm to reduce the range of values needed.
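As an illustration of the non-linear option, a square-root transform could map signed 16-bit samples into signed 8-bit values like this. This is a sketch of one possible scheme, with illustrative function names. Whether it actually preserves classification performance would need to be tested.

```python
import math


def compress_sqrt(sample_16bit: int) -> int:
    """Map a signed 16-bit sample to a signed 8-bit value using a square-root curve."""
    magnitude = min(abs(sample_16bit), 32767)
    compressed = round(math.sqrt(magnitude / 32767) * 127)
    return compressed if sample_16bit >= 0 else -compressed


def expand_sqrt(value_8bit: int) -> int:
    """Approximate inverse of compress_sqrt."""
    magnitude = round((abs(value_8bit) / 127) ** 2 * 32767)
    return magnitude if value_8bit >= 0 else -magnitude


print(compress_sqrt(0), compress_sqrt(32767), compress_sqrt(-32768))  # 0 127 -127
```

The square-root curve spends more of the 8-bit range on small values, so low-amplitude motion keeps more resolution than it would with linear scaling.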

Using 50 Hz sampling rate would also be very beneficial to reduce RAM usage. Assuming that feature processing is quite fast, it should also be possible to not use full double-buffering. It may also be possible to keep a buffer of computed features (much smaller in size) for the windows and classify them together. This would allow reducing the window size, but maintain information from a similar amount of time in order to keep performance up.

So it seems feasible to find a configuration under 2 kB RAM that has good performance.
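The buffer sizes discussed in this section follow directly from the configuration parameters. A minimal sketch of the calculation (function names are illustrative), assuming 4096 bytes of RAM in total:

```python
def buffer_bytes(channels, bits, samplerate_hz, duration_s, buffers=2.0):
    """RAM needed for input buffers holding one window of multi-channel samples."""
    samples = round(samplerate_hz * duration_s)
    return round(samples * channels * (bits // 8) * buffers)


def ram_percent(size_bytes, ram_bytes=4096):
    """Share of total RAM used, in percent."""
    return 100.0 * size_bytes / ram_bytes


size = buffer_bytes(channels=3, bits=16, samplerate_hz=100, duration_s=1.28)
print(size, ram_percent(size))  # 1536 37.5

size = buffer_bytes(channels=3, bits=8, samplerate_hz=50, duration_s=2.56, buffers=1.25)
print(size, round(ram_percent(size), 1))  # 480 11.7
```

A `buffers` value of 2.0 corresponds to full double-buffering, while 1.25 models a partial second buffer that only needs to hold the samples arriving while features are being computed.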

Feature extraction

In the previously referenced paper, they used 9 features. These compute simple statistics directly on each window.
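To give a feel for what such features look like, here is a sketch that computes a few simple per-axis statistics on one window. The post does not list the exact 9 features from the paper, so the statistics chosen here (mean, standard deviation, minimum, maximum) are stand-ins for illustration only.

```python
import math


def axis_features(values):
    """Simple time-domain statistics for one accelerometer axis."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / n
    return [mean, math.sqrt(variance), min(values), max(values)]


def window_features(window):
    """Flat feature vector for a window given as a list of (x, y, z) samples."""
    features = []
    for axis in zip(*window):  # iterate over the x, y and z columns
        features.extend(axis_features(axis))
    return features


window = [(0, 1, 2), (2, 1, 0)]
print(window_features(window)[:4])  # [1.0, 1.0, 0, 2]
```

Each statistic needs only a single pass over the window and a handful of accumulator variables.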

No FFT or similar heavy processing is used. This should have negligible RAM usage (under 256 bytes) and FLASH usage (under 5 kB).

Random Forest classifier, FLASH requirements

The previously mentioned paper tested using 10-100 trees, with a max_depth of 9. The authors found that more than 50 trees gave only marginal improvements in F1 score. They reported that 10 trees used 10 kB FLASH, and 50 trees around 50 kB FLASH.

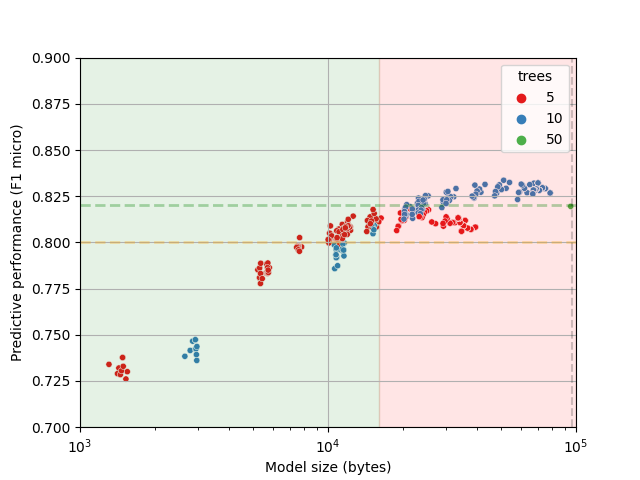

However, it does not appear that they did any hyperparameter optimization to find smaller models. Therefore, I forked their git repository with the experiments and added my own tuning. I varied the depth of the trees and the number of features per tree, in order to see if a smaller number of trees could reach the same performance. Reducing both the number of trees and the depth helps to reduce model size. The code can be found at https://github.com/jonnor/feature-on-board-activities, and results are in the following image:

![]()

The original performance (50 trees, max_depth=9) is marked with a green dot (on the right side). We can see that 5 trees can just barely hit the same performance levels, and that 10 trees are able to improve the performance. The model size can be 4-10x smaller with no or marginal degradation in performance.

The size estimates in the plot are for the "loadable" inference strategy in emlearn. Benchmarks show that when using the "inline" inference strategy with 8-bit integers, the model size is approximately half. So the models that take 20 kB in the plot should in practice take around 10 kB.

At 10 kB these models are able to match the performance of the untuned model (which matched the deep-learning baselines), and fit in our 16 kB FLASH budget.

-

Ultra low cost microcontrollers

01/20/2024 at 01:01

If the complete BOM for the sensor is to be under 1 USD, the microcontroller needs to cost way below this. Preferably below 25% of the budget, in order to leave room for sensors, power and communication.

Thankfully, there have been a lot of improvements in this area over the last years. Looking at LCSC.com, we can find some interesting candidates:

- ST STM32G030F6P6. 32 kB FLASH / 8 kB RAM. `0.30 USD @ 1k`

- WCH CH32V003. RISC-V. 16 kB FLASH / 2 kB RAM. 48 MHz. QFN-20. `0.15 USD @ 1k`

- Puya PY32F003x6. 4 kB RAM / 32 kB FLASH. `0.13 USD @ 1k`.

- Puya PY32F002. Cortex M0+. 20 kB FLASH / 3 kB RAM. 24 MHz. `0.10 USD @ 1k`

- Padauk PFS154. 2 kB FLASH / 128 bytes of RAM, `0.06 USD @ 1k`

- Fremont Micro Devices FMD FT60F011A-RB. 1kB FLASH / 64 bytes RAM. `0.06 USD @ 1k`.

There are also a few sub-1 USD microcontrollers that have integrated wireless connectivity.

- WCH CH582F. Bluetooth Low Energy. `0.68 USD @ 1k`

Implications for 1 dollar TinyML project

It looks like if we budget 10-20 cents USD to the microcontroller, then we get around:

- 16-20 kB FLASH

- 2-4 kB RAM

- 24-48 MHz clock speed

- 32 bit CPU

- No floating-point unit (FPU)

At this price point the WCH CH32V003 or the Puya PY32F003x6 look like the most attractive options. Both have decent support in the open community. WCH CH32 can be targeted with cnlohr/ch32v003fun and Puya with py32f0-template.

What kind of ML tasks can we manage to perform on such a small CPU? That is the topic for the next steps.

Jon Nordby
